Using LLMs as a Translator

November 19, 2024

Introduction

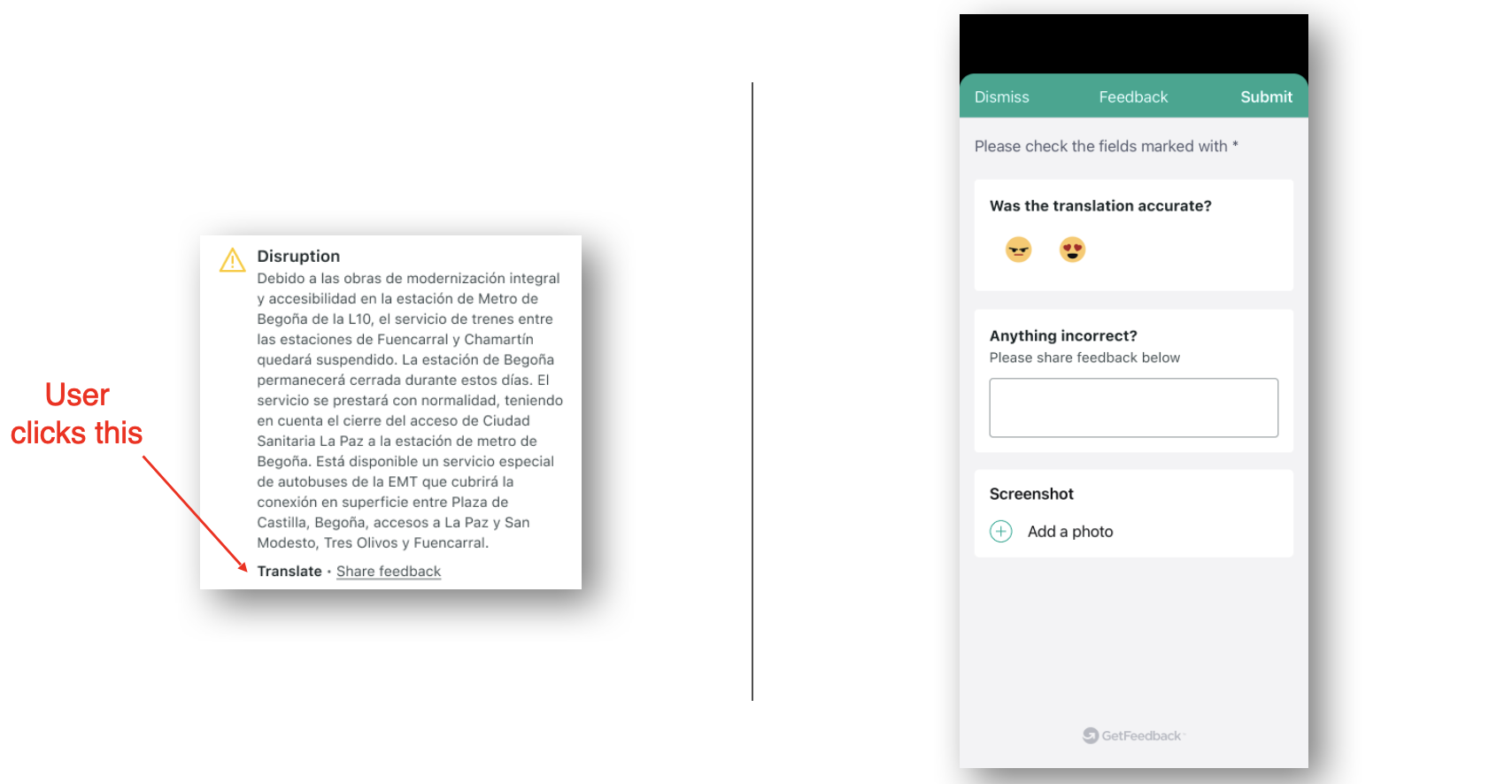

Travel disruptions are stressful enough; a disruption message in a language you can't read makes them worse. At Trainline we saw a clear gap: disruption messages were not translated for customers traveling internationally.

We asked a simple question: can LLMs close that gap quickly enough for real-time use?

Closing it could improve customer retention and give us a reusable foundation for future multilingual features.

The Hypothesis

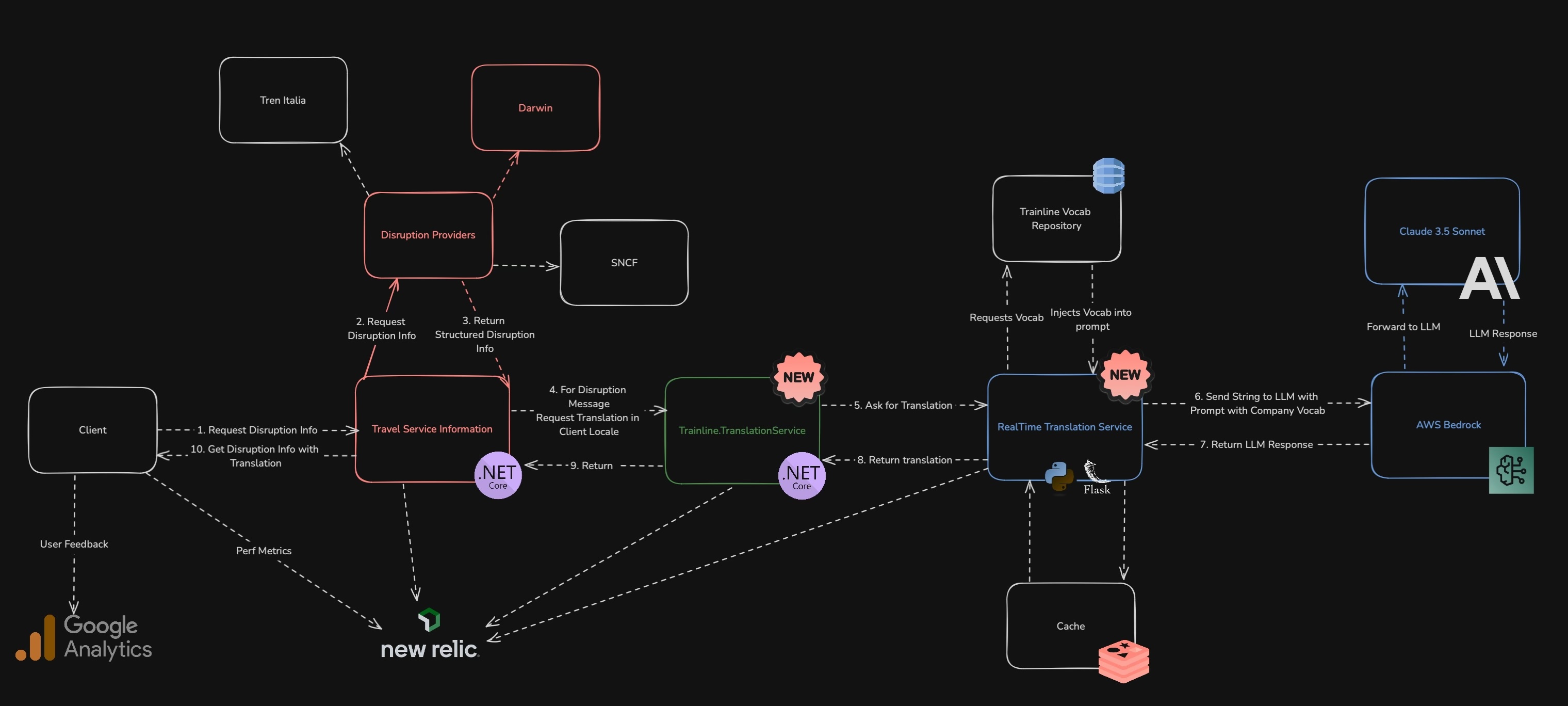

Our AI Lab strategy was to build small, useful services that could later plug into bigger systems. Real-time translation fit that model.

We hypothesized it would:

- Differentiate Trainline from competitors like Omio and Uber

- Reduce disruption-related frustration and improve retention

- Create a translation capability we could reuse across products

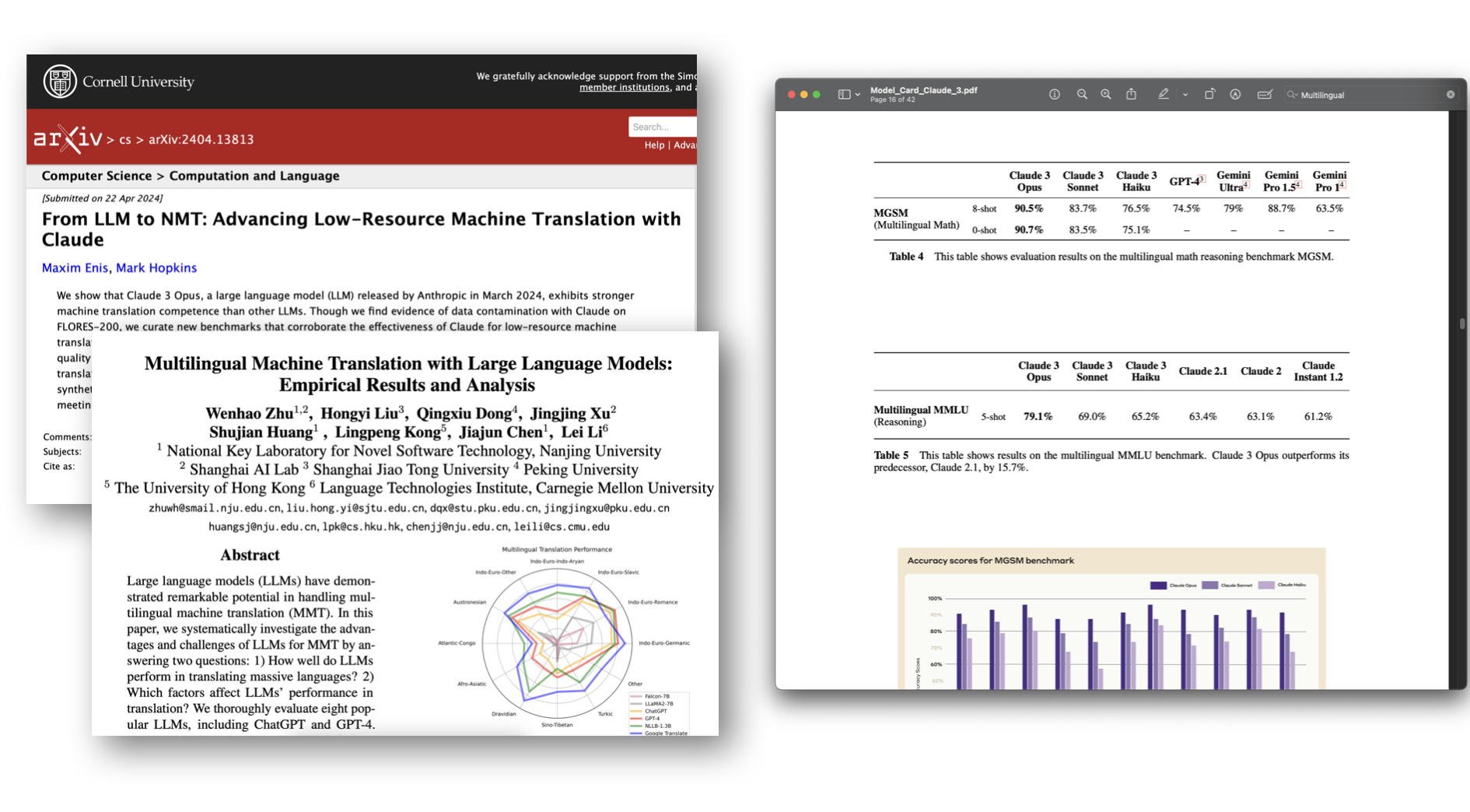

We also wanted evidence that LLMs could handle low-resource translation. The paper "From LLM to NMT: Advancing Low-Resource Machine Translation with Claude" reported strong results for Claude 3 Opus.

The Approach

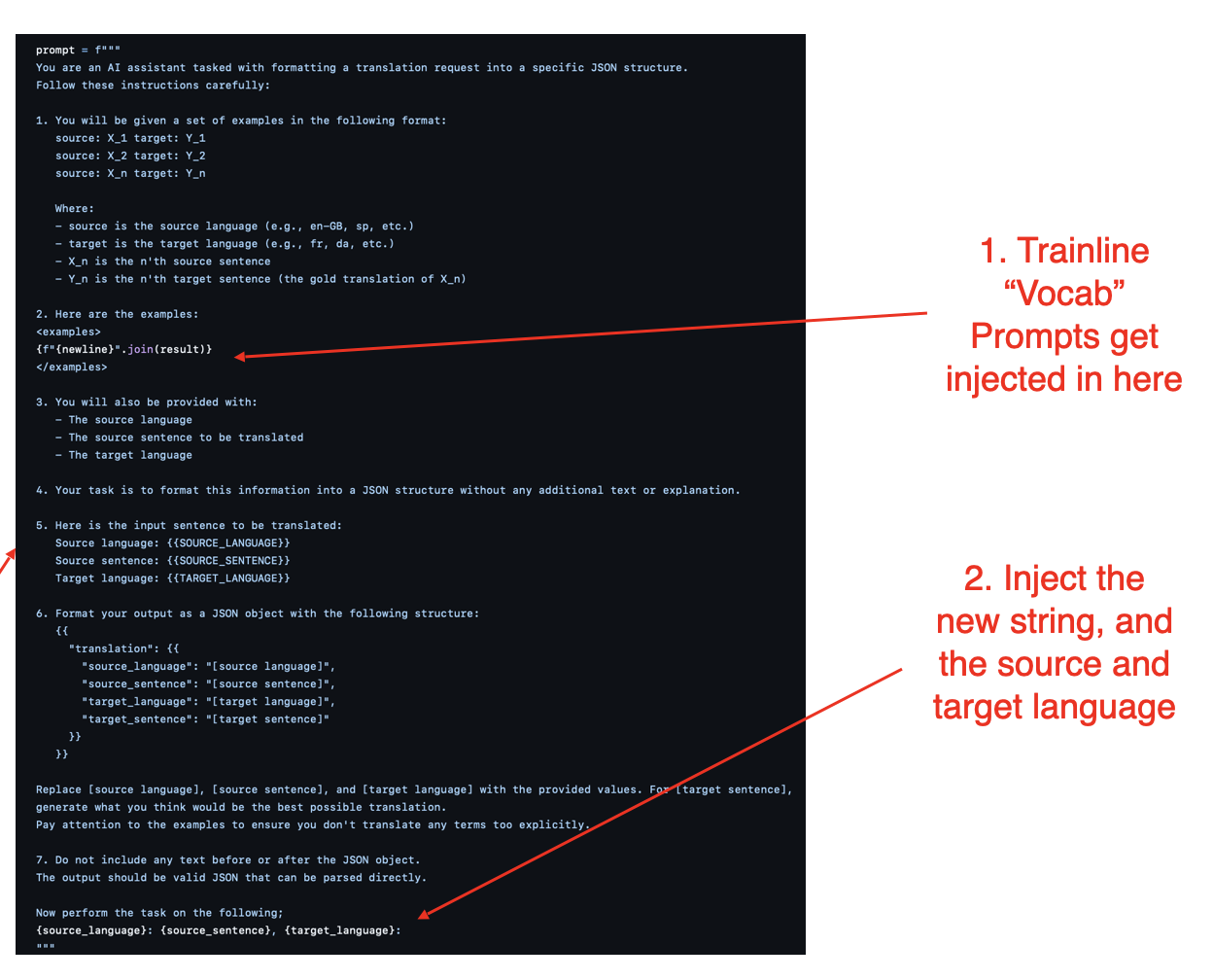

We focused on three areas:

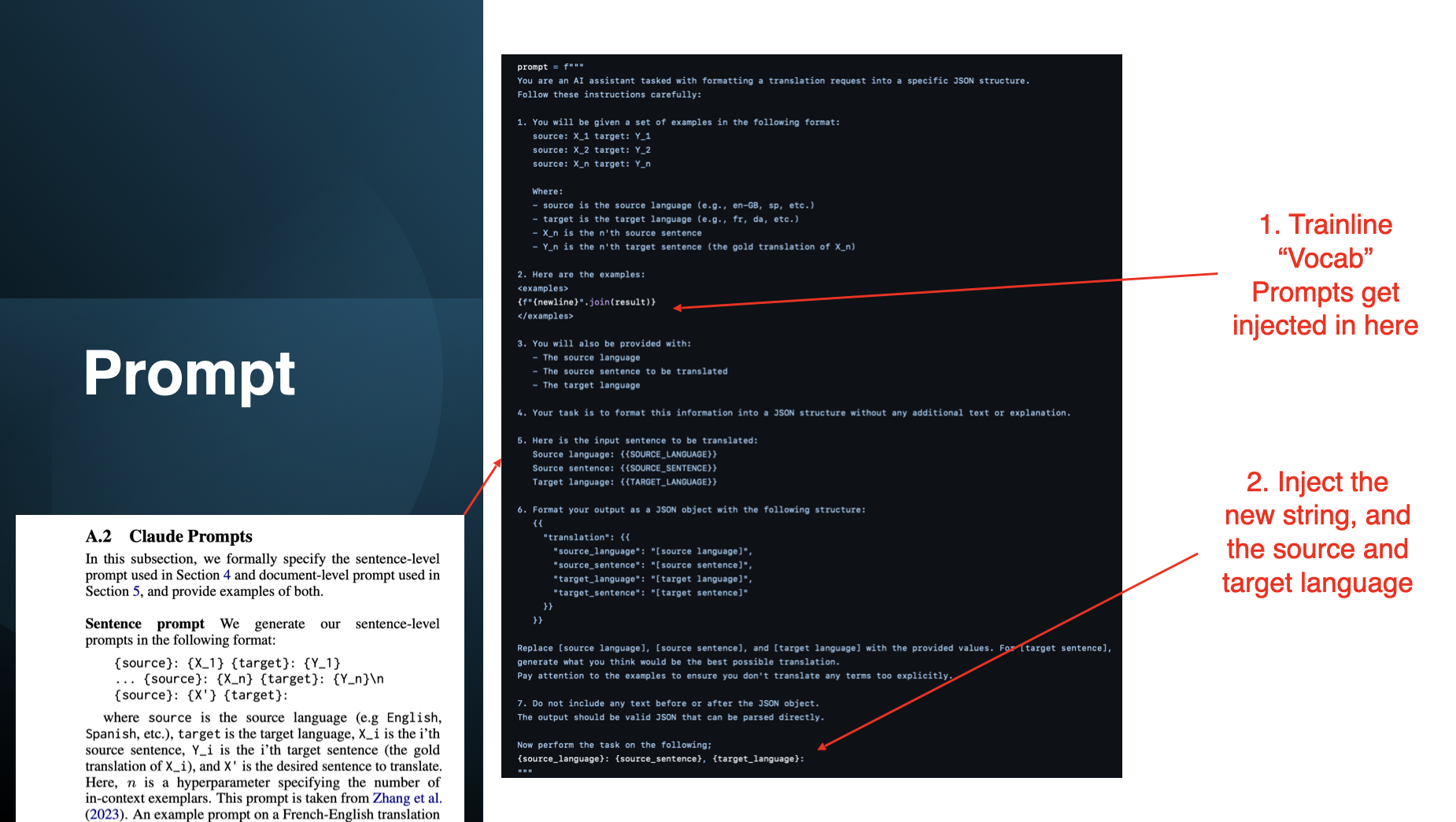

- Prompt design

  - Inject Trainline-specific vocabulary for context

  - Adjust to source and target languages dynamically

- Technical implementation

  - Embed translations directly in disruption notifications

  - Keep the system language-agnostic for future expansion

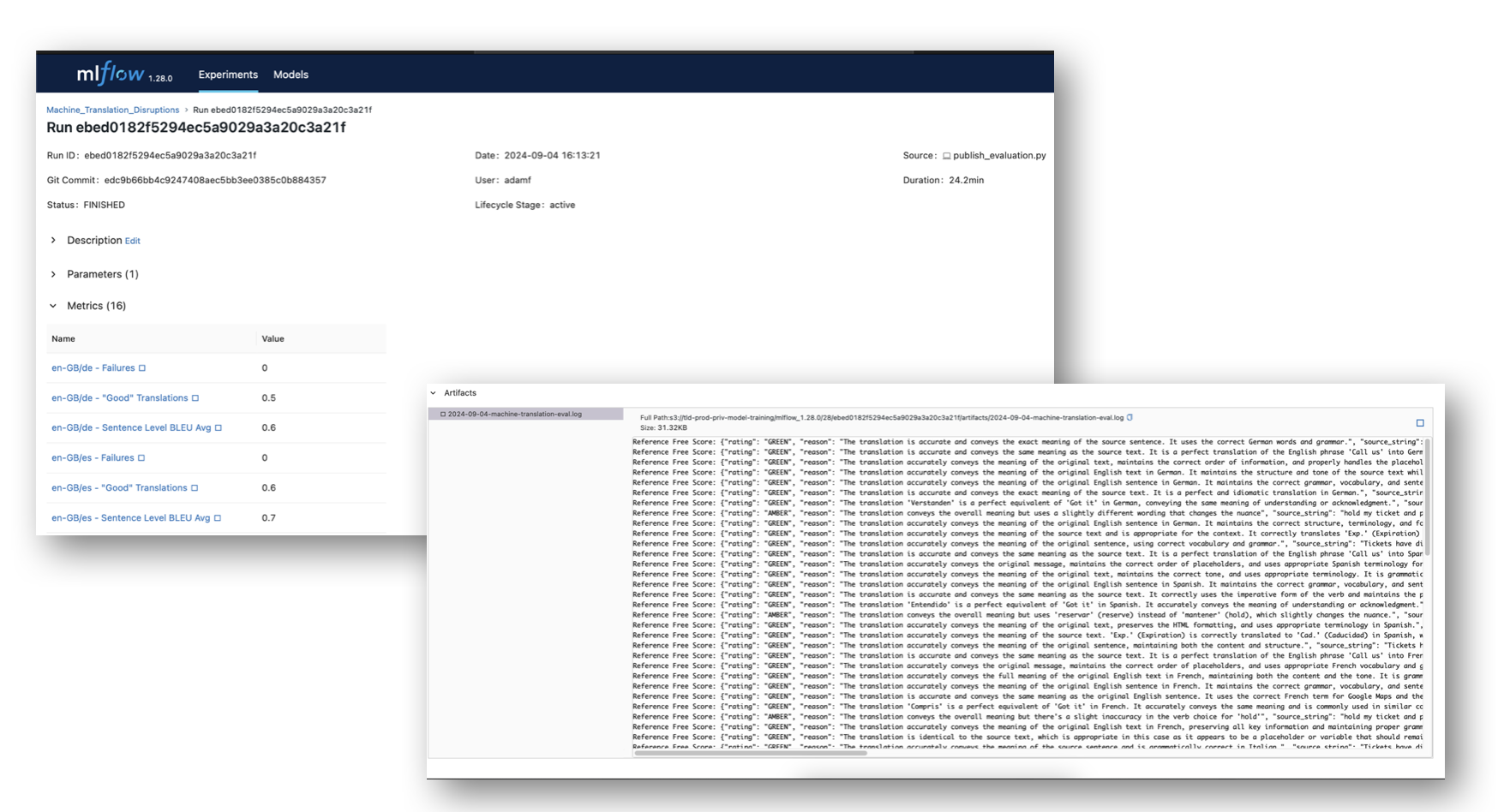

- Evaluation

  - Offline: BLEU scores for translation quality

  - Online: A/B tests for satisfaction and retention
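The post doesn't say which BLEU implementation was used. As a rough illustration of what the offline metric measures, here is a minimal corpus-level BLEU sketch: clipped n-gram precisions p_1 to p_4 are combined as a geometric mean and scaled by a brevity penalty, BLEU = BP × exp((1/N) Σ log p_n). It assumes whitespace tokenization and one reference translation per message and applies no smoothing; for scores comparable with published numbers, an established tool such as sacreBLEU is the safer choice.

```python
import math
from collections import Counter


def ngram_counts(tokens, n):
    """Count every contiguous n-gram of length n in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def corpus_bleu(hypotheses, references, max_n=4):
    """Corpus-level BLEU on a 0-100 scale, one reference per hypothesis.

    Assumes whitespace tokenization and applies no smoothing, so any n-gram
    order with zero matches gives a score of 0.
    """
    matches = [0] * max_n
    totals = [0] * max_n
    hyp_len = ref_len = 0
    for hyp, ref in zip(hypotheses, references):
        h, r = hyp.split(), ref.split()
        hyp_len += len(h)
        ref_len += len(r)
        for n in range(1, max_n + 1):
            h_counts, r_counts = ngram_counts(h, n), ngram_counts(r, n)
            # Clip each hypothesis n-gram count at its count in the reference.
            matches[n - 1] += sum(min(c, r_counts[g]) for g, c in h_counts.items())
            totals[n - 1] += sum(h_counts.values())
    if min(matches) == 0:
        return 0.0
    # Geometric mean of the modified precisions p_1..p_N.
    log_p = sum(math.log(m / t) for m, t in zip(matches, totals)) / max_n
    # Brevity penalty: punish output that is shorter than the references.
    bp = 1.0 if hyp_len > ref_len else math.exp(1 - ref_len / hyp_len)
    return 100 * bp * math.exp(log_p)


if __name__ == "__main__":
    hyp = ["der zug nach berlin hat heute verspätung"]
    ref = ["der zug nach berlin hat verspätung"]
    print(round(corpus_bleu(hyp, ref), 1))  # prints 64.3
```

Because BLEU rewards exact n-gram overlap, a valid paraphrase can still score low, which is one reason to pair it with online measures such as the A/B tests.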

What we built

- A C# library called Trainline.TranslationService

- A database of Trainline-specific terms to inject into the prompt

- A Python backend service that calls Claude 3.5 through AWS Bedrock
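The post names these pieces but not how they connect. Below is a minimal sketch of the Python side, assuming the term database is a SQLite table and that the service calls Claude through Bedrock's Converse API (the `converse` operation on a boto3 `bedrock-runtime` client). It looks up glossary entries whose term appears in the message, injects them into the system prompt together with the source and target language codes, and returns the model's reply. The table schema, prompt wording, function names and model ID are illustrative assumptions, not Trainline's actual code, and the C# library's side of the call is not shown.

```python
import sqlite3

# Hypothetical model choice: the Bedrock ID of Claude 3.5 Sonnet (June 2024).
# Check which model IDs are enabled in your AWS account and region.
MODEL_ID = "anthropic.claude-3-5-sonnet-20240620-v1:0"


def create_glossary(conn):
    """Create a hypothetical glossary table: one row per term and target language."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS glossary ("
        "term TEXT NOT NULL, target_lang TEXT NOT NULL, translation TEXT NOT NULL, "
        "PRIMARY KEY (term, target_lang))"
    )


def lookup_terms(conn, text, target_lang):
    """Return (term, translation) pairs whose term occurs in text (case-insensitive)."""
    rows = conn.execute(
        "SELECT term, translation FROM glossary WHERE target_lang = ? ORDER BY term",
        (target_lang,),
    ).fetchall()
    lowered = text.lower()
    # Naive substring match; a real service might tokenize or use word boundaries.
    return [(term, tr) for term, tr in rows if term.lower() in lowered]


def build_prompt(source_lang, target_lang, terms):
    """Build a system prompt for one language pair plus any matched glossary terms."""
    prompt = (
        f"Translate the rail disruption message from language code '{source_lang}' "
        f"to language code '{target_lang}'. Keep times, station names and platform "
        "numbers unchanged. Reply with the translation only."
    )
    if terms:
        lines = "\n".join(f"- {term} => {tr}" for term, tr in terms)
        prompt += f"\nAlways translate these terms as follows:\n{lines}"
    return prompt


def translate(client, conn, text, source_lang, target_lang):
    """Translate one message with a bedrock-runtime client's Converse API."""
    terms = lookup_terms(conn, text, target_lang)
    response = client.converse(
        modelId=MODEL_ID,
        system=[{"text": build_prompt(source_lang, target_lang, terms)}],
        messages=[{"role": "user", "content": [{"text": text}]}],
        inferenceConfig={"maxTokens": 512, "temperature": 0.0},
    )
    # The Converse API returns the assistant reply as a list of content blocks.
    return response["output"]["message"]["content"][0]["text"]
```

In a deployed service the client would come from `boto3.client("bedrock-runtime", region_name=...)`; passing it in as a parameter keeps the function testable with a stub. Because the language pair is just a parameter and the glossary is keyed by target language, adding a language means adding glossary rows rather than changing code, in line with the language-agnostic goal. Setting `temperature` to 0 reduces sampling randomness, so the same disruption notice translates more consistently across customers.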

Outcomes

- Fewer complaints about unreadable disruption messages

- More positive feedback on clarity during disruptions

- A reusable translation service we can plug into new products

Learnings

Real-time translation is hard: accuracy, latency, and cost all matter. But careful prompt design plus strong LLMs made it viable. The bigger lesson was product focus: solving a real pain point creates momentum for future features.

Recap

By fixing untranslated disruption messages, we improved the immediate customer experience and built a foundation for future multilingual features. Real-time LLM translation is more than a nice-to-have; it is a step toward a global travel assistant.

If you're interested in building production systems, check out my post on how I built this blog with Emacs and Docker.